This is my attempt to distill the lore of bufferbloat, and describe how you can mitigate it on your network. It seems an appropriate inauguration post for my once-again resurrected blog.

Symptoms

Saturation of network links causes massive amounts of latency and slowdown, particularly on the slow uplinks of asymmetric residential internet service (though it can and does occur on the downlinks as well). Applications doing big bulk downloads can add lots of latency to the network, causing real-time applications (VOIP, gaming, or SSH) to lag badly or fail outright.

Principles

(this is a refresher on certain fundamentals of how the Internet works; you can skip this bit if you already know the gist of it)

Internet networks are fundamentally packetized, and they do not guarantee packet delivery. These properties greatly simplify switching and multiplexing of multiple traffic flows on the same network. As such, network protocols, if they wish to guarantee data arrival, must make their own arrangements (typically with acknowledgement messages and retransmits).

TCP is a protocol that endeavours to present a pipe-like streaming interface to applications that care about content delivery and not about real-time latency (HTTP being one of the most famous users of it). As it must do so on top of a path on the packetized network, with separate pipes between each hop, it needs to arrange for an appropriate amount of data to be “in flight” in packets travelling across the network. If it naïvely waited for acknowledgements for single packets, much of the network capacity between the endpoints would sit empty as that single packet traverses all of the hops. Thus, it implements a congestion control “windowing” arrangement in an attempt to detect the appropriate amount of data to have in flight for optimum transfer speed. This is why download speeds start slow and grow to some maximum.
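To put rough numbers on the windowing idea, here is a small Python sketch. All of the inputs are hypothetical: the 28 Mbit/s rate, the 40 ms round trip, the 1460-byte segment and the 10-segment initial window. The slow-start model is also idealized: the window simply doubles every round trip, ignoring losses and delayed ACKs. Treat it as an illustration, not as how any real TCP stack behaves.

```python
def bdp_bytes(rate_bps: float, rtt_s: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to keep the path full."""
    return rate_bps * rtt_s / 8


def stop_and_wait_bps(segment_bytes: int, rtt_s: float) -> float:
    """Throughput if only one segment is in flight and the sender waits for its ACK."""
    return segment_bytes * 8 / rtt_s


def slow_start_rounds(target_bytes: float, mss: int = 1460, initial_segments: int = 10) -> int:
    """Round trips for an idealized slow start (window doubles per RTT) to reach target."""
    window = initial_segments * mss  # assumed initial window, in bytes
    rounds = 0
    while window < target_bytes:
        window *= 2
        rounds += 1
    return rounds


if __name__ == "__main__":
    rate, rtt = 28e6, 0.040  # hypothetical 28 Mbit/s path with a 40 ms round trip
    print(f"one packet at a time: {stop_and_wait_bps(1460, rtt) / 1e6:.2f} Mbit/s")
    print(f"bytes in flight needed: {bdp_bytes(rate, rtt):.0f}")
    print(f"slow-start round trips to get there: {slow_start_rounds(bdp_bytes(rate, rtt))}")
```

With these made-up numbers, sending one packet at a time moves only about 0.29 Mbit/s. Filling the path needs roughly 140,000 bytes in flight, which takes about four doublings from the assumed initial window.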

Network hardware (switches, modems, adapters, and even the software drivers for those adapters) maintains buffers of packets. The buffers serve two purposes. First, they avoid losing packets to momentary conditions endemic to the physical layer, or to other implementation details. Second, they absorb the statistical tendency of packets in packet networks to occasionally arrive in bunches.

Pathology of Bufferbloat

Many network hardware designers merely assumed that more is better and added huge first-in-first-out buffers to many of their devices. However, the problem of how much buffer to include in a device (and how to prioritize packets in it) for best effect proved to be non-trivial. What was not well appreciated by the hardware designers was that packet networks must forward a packet promptly or drop it. Without that core assumption, the higher level protocols cannot operate.

Quite simply, these giant, unmanaged buffers are prone to carrying a balance rather than merely absorbing temporary statistical transients. As a result, packets (even those of very latency-sensitive protocols, like gaming) can find themselves badly delayed while the buffer drains at the rate the network device can transmit. Protocols like TCP, having no way to know otherwise, can inadvertently pump these buffers full (particularly at bottlenecks) as they try to match the amount of in-flight data to the size of the pipe. In short, excess queuing of packets in these buffers makes the network path seem longer than it is.

Thus, bufferbloat is the cause of most of the symptoms of network congestion.
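Here is a quick back-of-the-envelope sketch of why a standing queue makes the path “seem longer”. The extra delay is just the queued bytes divided by the rate at which the link drains them. The 64 KiB buffer size is a hypothetical figure, not a measurement of any particular modem.

```python
def queue_delay_s(buffered_bytes: int, drain_rate_bps: float) -> float:
    """Seconds a newly arrived packet waits behind a standing FIFO queue."""
    return buffered_bytes * 8 / drain_rate_bps


def loaded_rtt_s(base_rtt_s: float, buffered_bytes: int, drain_rate_bps: float) -> float:
    """Round-trip time once the bottleneck buffer carries a standing balance."""
    return base_rtt_s + queue_delay_s(buffered_bytes, drain_rate_bps)


if __name__ == "__main__":
    buf = 64 * 1024  # hypothetical modem buffer size in bytes
    for rate in (1e6, 28e6):
        print(f"{rate / 1e6:>4.0f} Mbit/s: +{queue_delay_s(buf, rate) * 1000:.1f} ms")
```

On a 1 Mbit/s uplink, a full 64 KiB buffer adds about half a second to every round trip. The same buffer in front of a 28 Mbit/s link adds under 20 ms, which is why the slow upstream is where the bloat bites hardest.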

How it tends to happen at edge (read: your) networks

Because this tends to occur at bottlenecks, the upstream and downstream links to your ISP (in that order) are the likeliest places for bufferbloat to appear in general use of the global network. However, fast, local transfers between two stations on your network can cause bufferbloat to occur directly on your local WiFi or Ethernet devices.

How it’s getting solved

The problem has been noticed for many years, although the pathology has only become well understood and publicized in the last few. Anyone who has set the upload limit on their BitTorrent client to just shy of their upstream bandwidth to their ISP was trying to counteract the effects of bufferbloat.

ISPs, dismayed at the bad congestion causing service interruptions for many of their customers (and not just those running bandwidth-expensive applications), implemented much-maligned “traffic shaping” regimes that restricted traffic identified as a specific protocol to certain speeds, trying to do the same. This was exacerbated by the fact that they oversubscribed themselves, knowing that most customers don’t use the full capacity of their links, or only for short periods.

That approach was worse than ineffective, in that not only did it fail to solve the root problem of delays induced by saturated buffers, it incentivized any self-interested party on the network (and, by extension, the designers implementing the software and protocols they’re using) to come up with technological contrivances (BitTorrent encryption, dancing their TCP port numbers, and so on) to obfuscate their traffic to make it escape the shapers’ heuristics.

There have been various abortive attempts at creating Active Queue Management (AQM) strategies such as RED. However, most of them require the use of various tuning knobs, or being informed of congestion elsewhere on the network via ECN, or both. With all of the additional complexity, failure cases, and overhead that implies, they’ve been difficult to implement and are not well suited to plug-and-play hardware devices.
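To make the “tuning knobs” concrete, here is a simplified Python sketch of RED’s drop decision. It follows the classic linear ramp in drop probability between two thresholds, applied to an exponentially weighted average of the queue length. It leaves out RED’s refinement that spaces drops according to how many packets have arrived since the last drop. The class name and parameter values are hypothetical. The point is that min_th, max_th, max_p and weight all have to be chosen per link.

```python
import random


class RedQueue:
    """Simplified Random Early Detection drop decision (no count-based drop spacing)."""

    def __init__(self, min_th: float, max_th: float, max_p: float, weight: float):
        self.min_th = min_th  # below this average queue length, never drop
        self.max_th = max_th  # at or above it, drop every arrival
        self.max_p = max_p    # drop probability just below max_th
        self.weight = weight  # EWMA gain for the average queue length
        self.avg = 0.0

    def drop_probability(self, avg: float) -> float:
        if avg < self.min_th:
            return 0.0
        if avg >= self.max_th:
            return 1.0
        return self.max_p * (avg - self.min_th) / (self.max_th - self.min_th)

    def on_arrival(self, queue_len: int, rng=random.random) -> bool:
        """Fold the current queue length into the average; return True to drop."""
        self.avg = (1 - self.weight) * self.avg + self.weight * queue_len
        return rng() < self.drop_probability(self.avg)
```

Take RedQueue(min_th=5, max_th=15, max_p=0.1, weight=0.002) as an example. It never drops while the averaged queue stays under 5 packets, drops with probability 0.05 at an average of 10, and drops everything at 15 or more. Pick those numbers badly for a given link and RED either does nothing or drops too much.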

Around early 2012, Kathleen Nichols and Van Jacobson announced a new AQM algorithm they entitled CoDel. CoDel drops packets from a buffer when the minimum time spent by any packet in the device’s queue stays above a small target (5ms by default) for longer than an interval (100ms by default). This means that it permits buffers to fulfill their original function of smoothing statistical spikes (“good” queue) but prevents any perpetual queuing in the buffers (“bad” queue). It doesn’t require any configuration, nor does it require notifications from other hops. It’s simple to implement, even for hardware. (Mostly simple, that is: there can be multiple layers of buffers in both hardware and software, and each of them also needs to be managed.) Certain link layers, like WiFi, are more complex and require a trickier implementation, but they’re coming along. Linux already has it (fq_codel in the kernel and support in the tc utility), at least for wired Ethernet. Check out CeroWrt, a router firmware project (forked from OpenWrt) that implements CoDel (and other techniques) for certain models of the Netgear WNDR3700/3800. The work is getting upstreamed back into OpenWrt and other router firmware projects, so CoDel is now there, too: in OpenWrt 12.09 and later, enabling the QoS feature automatically enables CoDel.
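Here is a much-simplified Python sketch of CoDel’s dequeue-time logic, loosely modelled on the pseudocode in Nichols and Jacobson’s paper. It is an illustration under stated assumptions, not a faithful port. It omits the check that skips dropping when less than one maximum-size packet is queued. It also always restarts the drop count at 1 instead of remembering recent drop episodes. The class and method names are hypothetical. The key pieces are:

- the per-packet enqueue timestamp,
- the 5 ms target,
- the 100 ms interval,
- the control law that spaces successive drops by interval / sqrt(count).

```python
import math
from collections import deque


class CoDelQueue:
    """Simplified CoDel: timestamp packets on enqueue, decide drops on dequeue."""

    def __init__(self, target: float = 0.005, interval: float = 0.100):
        self.target = target          # acceptable standing delay (5 ms default)
        self.interval = interval      # how long delay may stay above target (100 ms)
        self.q = deque()              # (enqueue_time, packet) pairs
        self.first_above_time = 0.0   # time after which dropping may start; 0 = unset
        self.dropping = False
        self.drop_next = 0.0
        self.count = 0
        self.dropped = []

    def enqueue(self, now: float, packet) -> None:
        self.q.append((now, packet))

    def _pop(self, now: float):
        """Remove the head packet and report whether CoDel may drop it."""
        if not self.q:
            self.first_above_time = 0.0
            return None, False
        enq_time, packet = self.q.popleft()
        if now - enq_time < self.target:
            self.first_above_time = 0.0  # queue delay is acceptable again
            return packet, False
        if self.first_above_time == 0.0:
            self.first_above_time = now + self.interval
            return packet, False
        return packet, now >= self.first_above_time

    def _control_law(self, t: float) -> float:
        # Drops get closer together as count grows: interval / sqrt(count).
        return t + self.interval / math.sqrt(self.count)

    def dequeue(self, now: float):
        packet, ok_to_drop = self._pop(now)
        if packet is None:
            self.dropping = False
            return None
        if self.dropping:
            if not ok_to_drop:
                self.dropping = False  # delay fell below target: stop dropping
            while self.dropping and now >= self.drop_next:
                self.dropped.append(packet)
                self.count += 1
                packet, ok_to_drop = self._pop(now)
                if not ok_to_drop:
                    self.dropping = False
                else:
                    self.drop_next = self._control_law(self.drop_next)
        elif ok_to_drop:
            # Delay exceeded target for a whole interval: drop one, enter dropping state.
            self.dropped.append(packet)
            packet, _ = self._pop(now)
            self.dropping = True
            self.count = 1
            self.drop_next = self._control_law(now)
        return packet
```

Packets that pass through quickly are never touched. Dropping starts only when the delay at the head of the queue stays above target for a whole interval. It then drops more often the longer the standing queue persists.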

How to mitigate modem-side bloat on your home Internet connection

While CoDel solves the problem of bad buffering in each device’s own buffers, it does nothing about bad buffering in adjacent devices that haven’t been fixed. In the residential setup, ensuring that CoDel is active on your computers and your router is therefore insufficient for dealing with bad buffering in the modem and in your ISP’s equipment at the central office or headend. Both cable and DSL modems are notorious for having inappropriately sized buffers. As they are hardware, you’re basically limited to waiting for better gear to show up.

This is particularly unfortunate, because in general residential use, the bottleneck of the Internet connection itself (particularly upstream) is the most common place for bufferbloat to occur.

To demonstrate, I load up my upstream with a bulk TCP upload.

Saturating your downstream will produce similar results, although because of the asymmetric nature of residential service (downstream is often an order of magnitude faster) it is harder.

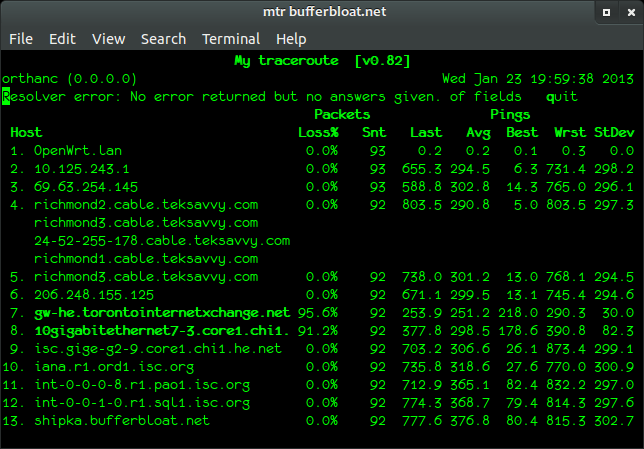

Then, I run the excellent mtr program against some other site out on the Internet, in order to measure the round trip time for pings to each hop:

Gross. More than half a second of lag at the first hop inside the ISP and by extension anywhere beyond. Any gaming or VOIP would be a total goner here, and browsing would get decidedly laggy. In this circumstance (upstream is bloated), the delay is introduced during departure and not return. Notice that except for those two weird hops between Hurricane Electric and TORIX, there is no loss at all. Huge lag with no packet loss is characteristic of bufferbloat.

All hope is not lost, however. You can ameliorate modem-side bloat with the global bandwidth limiting function in your router software. Configure it to limit throughput to the Internet to the (observed, or at least advertised) link rates of both the upstream and downstream of your connection to your ISP. The limiter works by watching the throughput across the interface and arbitrarily dropping packets if the throughput exceeds the limit. Because this applies to both sending and receiving, it convinces senders both inside and outside your network to back off, preventing them from pumping up the rogue buffers. This approach is clearly not ideal. The settings must match the actual link rates of the two streams of your ISP connection, and you probably lose any benefit of the buffers themselves. Still, it does a fair job of mitigating the hidden modem bloat. I’m quite happy with it.
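A rate limiter that drops excess traffic can be sketched as a token-bucket policer. This is a simpler stand-in for illustration, not what OpenWrt’s QoS package does internally (that package sets up Linux HFSC shaping). The class name, the 1 Mbit/s rate and the 3000-byte burst are hypothetical.

```python
class TokenBucket:
    """Token-bucket policer: admit a packet only if enough byte credit has accrued."""

    def __init__(self, rate_bps: float, burst_bytes: int):
        self.rate_bytes = rate_bps / 8   # credit earned per second, in bytes
        self.burst = burst_bytes         # maximum credit that can be saved up
        self.tokens = float(burst_bytes)
        self.last = 0.0

    def allow(self, now: float, size: int) -> bool:
        # Refill credit for the time elapsed since the last packet, capped at burst.
        self.tokens = min(self.burst, self.tokens + (now - self.last) * self.rate_bytes)
        self.last = now
        if self.tokens >= size:
            self.tokens -= size
            return True
        return False  # over the limit: drop, so TCP senders see loss and back off


if __name__ == "__main__":
    tb = TokenBucket(1_000_000, 3000)
    # Offer 1500-byte packets every millisecond (12 Mbit/s) for one second.
    allowed = sum(tb.allow(i / 1000, 1500) for i in range(1000))
    print(f"{allowed} of 1000 packets admitted")
```

Offered 12 Mbit/s against a 1 Mbit/s bucket, only about 85 of the 1000 packets get through. The drops are the signal that makes TCP senders slow down before any downstream buffer fills.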

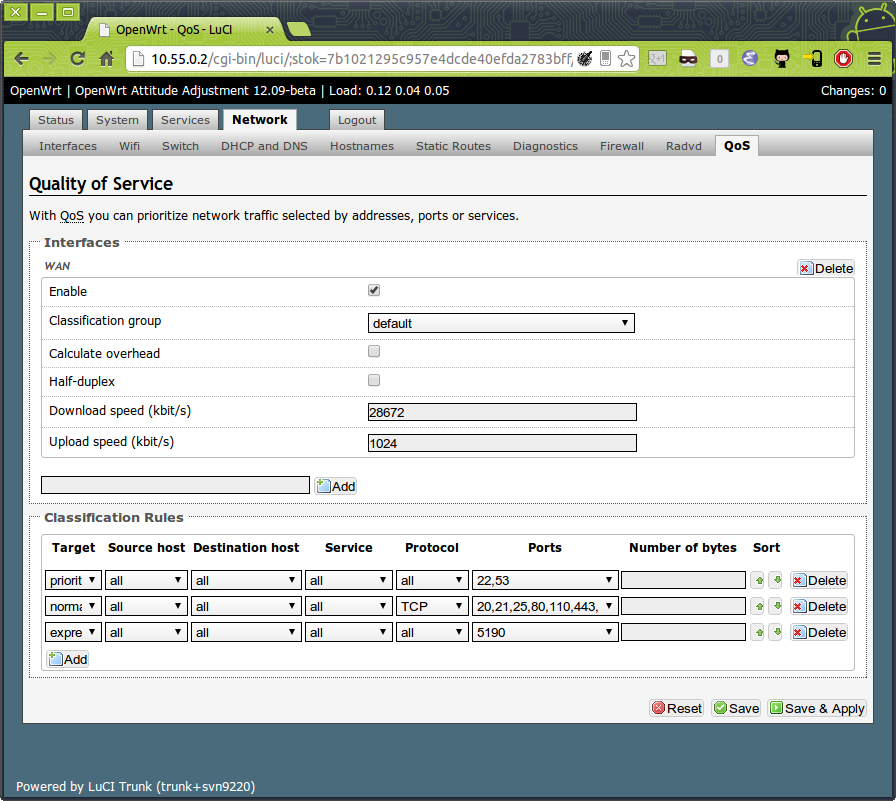

In this example, I’m running OpenWrt and added the luci-app-qos package. I’ve taken 28 (my ISP’s advertised downstream link rate, in megabits/s) and 1 (my ISP’s upstream link rate, in megabits/s) and multiplied them by 1000 to get the values in kilobits/s.

This sets a single Linux HFSC traffic shaping group for all traffic (with the two rates for upstream and downstream) just under the capacities of your modem’s link rates.
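The arithmetic for the two QoS fields is trivial, but here it is as a tiny Python helper. The function name and its margin parameter are hypothetical additions for leaving headroom below the modem’s real rate. With the default margin of 1.0, it simply multiplies by 1000.

```python
def qos_limits_kbit(down_mbit: float, up_mbit: float, margin: float = 1.0) -> tuple[int, int]:
    """Convert Mbit/s link rates to the kbit/s values a router's QoS form expects.

    margin is a hypothetical knob: 1.0 is the plain x1000 conversion, while a value
    such as 0.95 would leave a little headroom below the modem's actual rate.
    """
    return round(down_mbit * 1000 * margin), round(up_mbit * 1000 * margin)


if __name__ == "__main__":
    print(qos_limits_kbit(28, 1))  # (28000, 1000)
```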

Notice the presence of the traffic classification rules, which are setting SSH and DNS as high priority. These came as default with OpenWrt’s QoS package. Router owners have tried for years to use such rules to prioritize latency-sensitive traffic, in an attempt to deal with the then-unnamed problem of bufferbloat, but this approach is ineffective. For example, after I had set the limits, I began testing the system by saturating upstream with SSH, oblivious to the default rule prioritizing SSH. Latency for unprioritized traffic remained excellent anyway. The purpose of these rules is more to allocate a greater portion of the pipe to certain traffic. Without them, things like TCP streams by default divide the pipe more or less evenly between them. In short: folks who think they want classification shaping rules (i.e., because they have latency problems) probably don’t, and actually need to address bufferbloat issues instead.
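What a classification rule changes is mostly how the pipe is divided. Here is a simplified sketch, assuming every class always has data queued and shares bandwidth strictly in proportion to a weight. Real HFSC service curves are more involved, and the function name and weights are hypothetical.

```python
def weighted_shares(link_kbit: float, weights: dict[str, float]) -> dict[str, float]:
    """Split a link among always-busy classes in proportion to their weights."""
    total = sum(weights.values())
    return {name: link_kbit * w / total for name, w in weights.items()}


if __name__ == "__main__":
    # Two bulk TCP streams with equal weight split a 1000 kbit/s uplink evenly.
    print(weighted_shares(1000, {"bulk_a": 1, "bulk_b": 1}))  # {'bulk_a': 500.0, 'bulk_b': 500.0}
    # A hypothetical rule giving SSH three times the weight of bulk traffic.
    print(weighted_shares(1000, {"ssh": 3, "bulk": 1}))  # {'ssh': 750.0, 'bulk': 250.0}
```

Raising a class’s weight raises its share of throughput. It does nothing about a standing queue in the modem, and that standing queue is what actually produces the lag.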

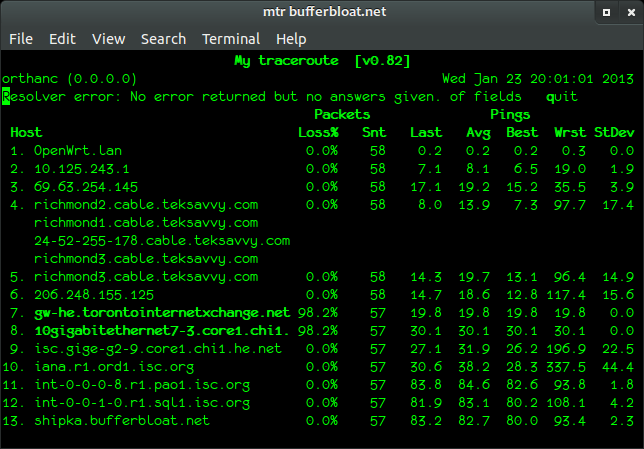

The results after applying the limits, after starting my upload back up again:

Much better (please ignore those two weird hops). Indistinguishable from when no upload is happening at all.

However, it is worth reiterating that this technique will have no effect on local bufferbloat happening between stations, or between a station and the router box. This can happen if you’re transferring files between two local boxes at full speed, particularly on wireless. By far the best solution here is full deployment of CoDel (including the messy details of making it work on wireless) on all of the local devices. Sadly, it may be some time yet before consumer wireless devices and Windows implement it. Thankfully, the form most commonly encountered on home Internet connections (i.e., modem/ISP bloat) can be ameliorated with the bandwidth limit.

TL;DR

Bufferbloat…

- … is caused by oversized or poorly managed buffers in network devices that hold a constant queue of packets entering and leaving at the link rate of the connection.

- … tends to appear at bottlenecks, like your cable modem (especially upstream).

- … is best fixed by ensuring that buffers don’t “carry a balance” of “bad queue”, and that they are only for eating transient spikes. Fancy throttling or prioritization rules are not effective. CoDel is the best bet for this so far, and software and hardware vendors are already making improvements.

- … in your modem and ISP can be mitigated in the meantime by setting the QoS bandwidth limits on your router box to the link speeds of your ISP connection.

Further reading

- Kathleen Nichols and Van Jacobson’s ACM paper describing CoDel

- bufferbloat.net

- Video: Jim Gettys – Bufferbloat: Dark Buffers in the Internet

- CeroWrt - a branch of OpenWrt created as a testbed for bufferbloat solutions

- Jim Gettys’ blog

I hope this has proved useful. Enjoy!